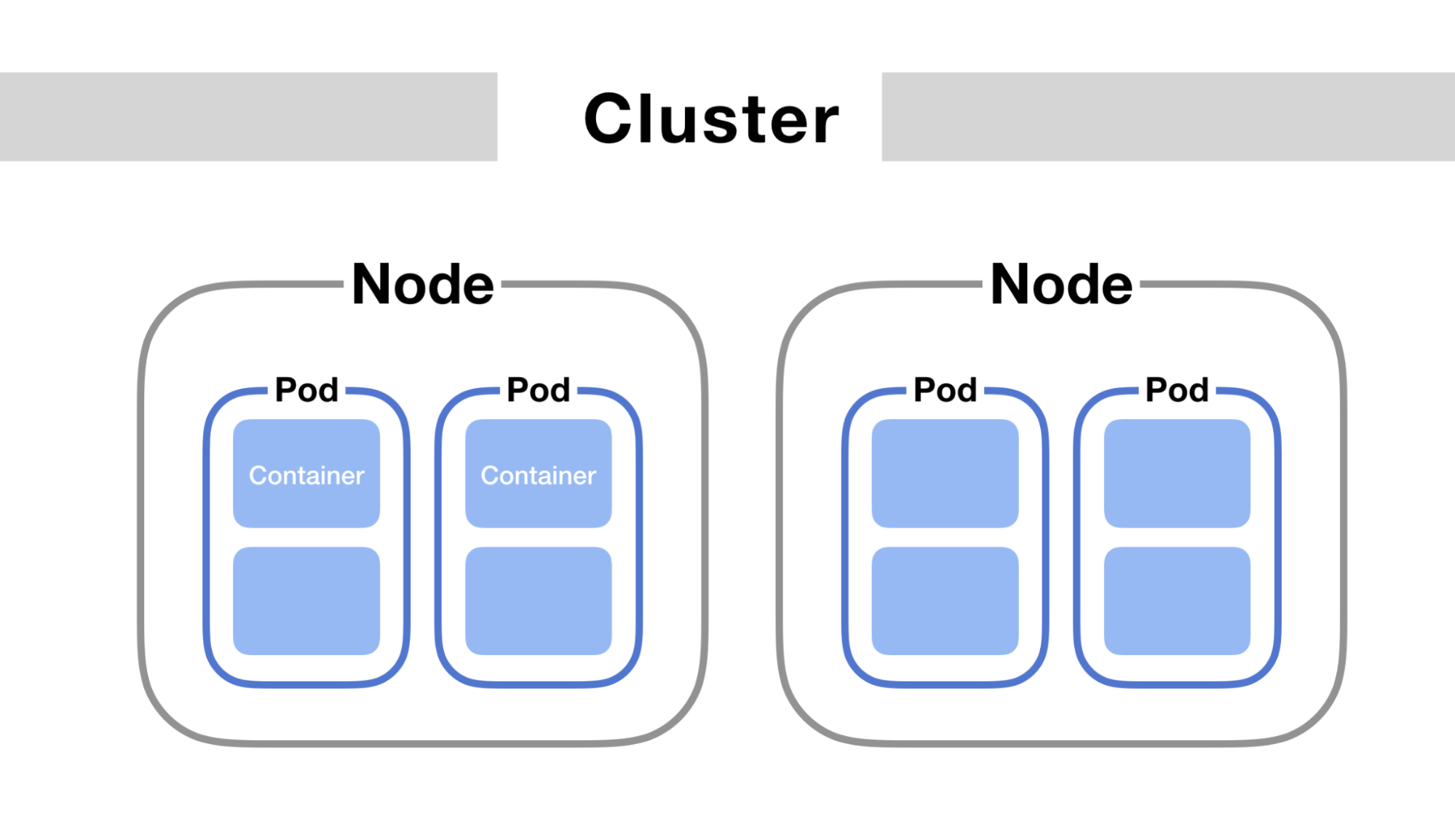

In Kubernetes, a set of machines for running containerized applications is called a cluster. A cluster contains a control plane and one or more nodes.

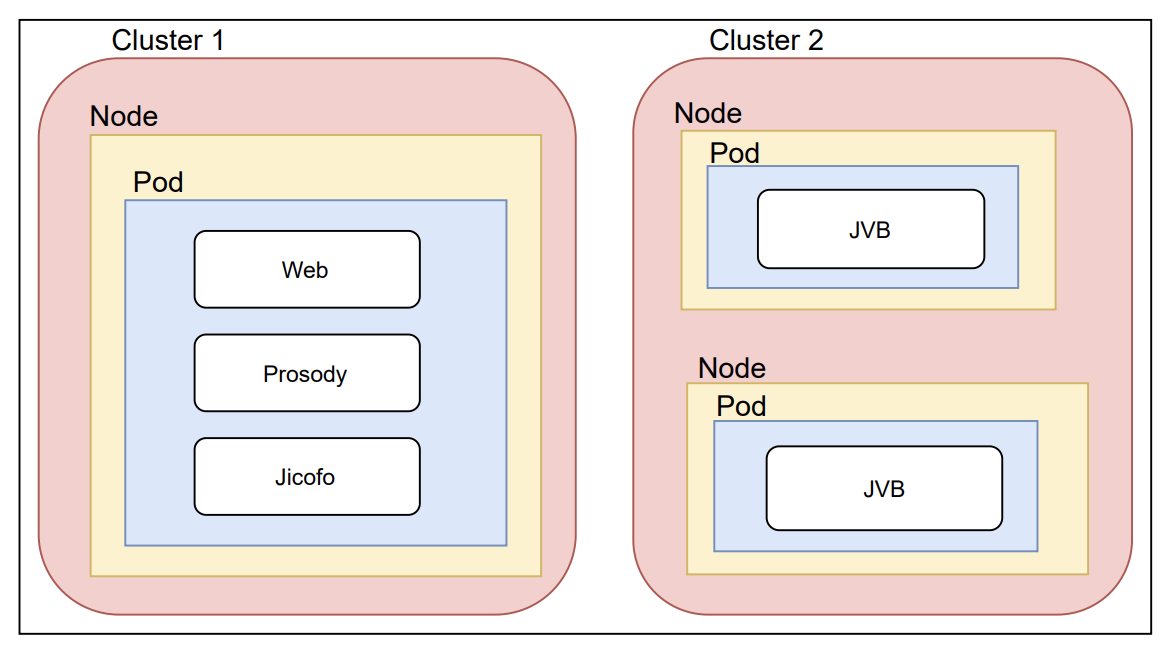

Jitsi Meet architecture with Kubernetes

The control plane keeps the cluster in the desired state, such as which applications are running and which images they use. The nodes are virtual or physical machines that run the applications and workloads. Workloads run on the nodes as Pods, and each Pod consists of one or more containers that request computing resources such as CPU, memory, or GPU.

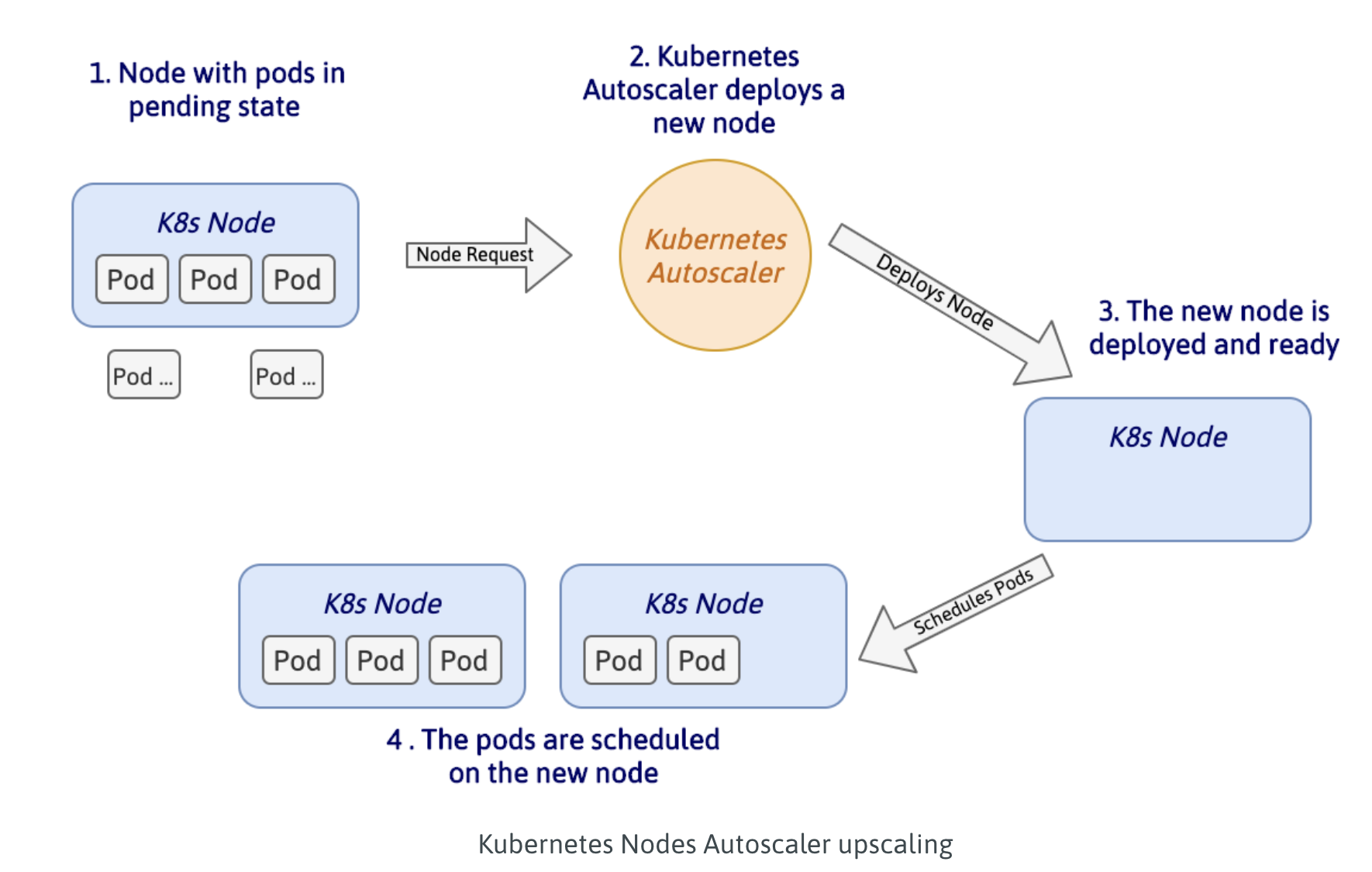

There are two types of scaling in Kubernetes:

Horizontal scaling: Changing the compute capacity of an existing cluster by adding new nodes or by increasing the replica count of pods (Horizontal Pod Autoscaler).

The Horizontal Pod Autoscaler (HPA) will scale the number of pods in a cluster to meet an application’s current computational workload requirements. It calculates the number of pods needed based on metrics you specify, and then creates or deletes pods according to thresholds. The most common metrics are CPU and RAM consumption, but you can also specify your own custom metrics.
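To make the calculation concrete, here is a minimal Python sketch of the scaling rule described in the Kubernetes documentation: desired replicas = ceil(current replicas × current metric / target metric). The function name, the example numbers, and the `min_replicas`/`max_replicas` defaults are illustrative assumptions. The real controller also handles missing metrics, pod readiness, and stabilization windows, which are left out here.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Simplified HPA rule: scale replicas in proportion to metric pressure."""
    ratio = current_metric / target_metric
    # Skip scaling when the metric is close enough to the target
    # (the HPA uses a default tolerance of 0.1 for this check).
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    wanted = math.ceil(current_replicas * ratio)
    # Keep the result inside the configured replica bounds.
    return max(min_replicas, min(max_replicas, wanted))


# 4 pods averaging 90% CPU against a 60% target scale out to 6 pods.
print(desired_replicas(4, 90, 60))
# 4 pods averaging 30% CPU against a 60% target scale in to 2 pods.
print(desired_replicas(4, 30, 60))
```

For a Jitsi Meet deployment, the metric could just as well be a custom one, such as the number of participants per video bridge pod, as long as it is exposed to the autoscaler.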

Vertical scaling: Modifying the resources (such as CPU or RAM) allocated to each node in the cluster. For pods, vertical scaling means dynamically adjusting the resource requests and limits based on the application's current requirements (Vertical Pod Autoscaler).
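As a rough illustration of the idea behind vertical pod scaling, the sketch below derives a CPU request from recent usage samples and clamps it to allowed bounds. This is a deliberately simplified stand-in, not the Vertical Pod Autoscaler's actual recommender. The function name, the percentile, the safety margin, and the bounds are all assumptions chosen for the example.

```python
def recommend_request(usage_samples, percentile=0.9, margin=0.15,
                      min_allowed=0.1, max_allowed=4.0):
    """Suggest a CPU request (in cores) from observed usage samples."""
    if not usage_samples:
        raise ValueError("at least one usage sample is required")
    ordered = sorted(usage_samples)
    # Pick a high percentile so short spikes are mostly covered.
    index = min(len(ordered) - 1, int(percentile * len(ordered)))
    # Add headroom on top of the observed usage (assumed safety margin).
    target = ordered[index] * (1 + margin)
    # Never recommend less or more than the allowed bounds.
    return max(min_allowed, min(max_allowed, target))


# Hypothetical CPU usage samples, in cores, for one container.
samples = [0.2, 0.3, 0.25, 0.4, 0.35, 0.5, 0.45, 0.3, 0.6, 1.0]
print(recommend_request(samples))
```

The bounds play the same role as the minimum and maximum allowed values that can be set in a Vertical Pod Autoscaler resource policy: they keep a recommendation from starving a container or claiming more than a node can offer.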

Meetrix also has commercial Kubernetes auto-scaling scripts for Google Cloud Platform (GCP), Microsoft Azure, and AWS for sale.

Looking for commercial support? Please contact us via hello@meetrix.io or the contact us page.
